Guess I will just go ahead and publish the rough draft below written in November 2023, since I cannot face long edit sessions at this point:

When I attended the University of Texas after graduating high school in 1971, I majored in psychology and sociology (but returned to school years later to study electrical engineering after some time playing lead guitar in rock bands). I enjoyed both fields even in these introductory contexts, coming to the attention of graduate staff in the psychology department when I handed in a well-written report on a preliminary experiment in extrasensory perception I conducted and being invited to tour the sociology department by my professor in that course after I turned in a piece of work where I outlined how I would recommend going about making a revolutionary transformation of a given society.

In the parapsychology experiment I observed greater-than-chance results in a test for increased accuracy on sends of Rhine cards (someone has since decided to call them Zener cards, our present age offering not much other than pointless renaming and pursuit of the trivial) between heterosexually involved pairs of subjects compared with mere acquaintances. The psychology graduate TA (teaching assistant) showed me around the psychology department, including his work with rattlesnake learning (my immediate question was, "What can they learn?"; his reply was an apologetic, "Not much..."). I recall fondly my initial assessment of my experimental results, when, though I had experienced psi phenomena all of my life (infrequently, I suppose, else I would have been even more distracted), the couples' results went off the charts on card transmission (could have been general psi also), my hair standing on end (which was significant, since my hair was shoulder-length at the time) and I breaking off to go get a pitcher of cold beer at a friendly local pub (I think it was physicist Leonard Susskind who recounted needing to drink a few beers after he found that it was more convenient to think of particles as loops of string in 1969; I suggest that you are much more likely to experience ESP than to see any actual useful physics emerge from string theory).

But back to the sociology group, in 1971 I had simply used what I had been taught (in the sociology class as well as my eclectic studies in anthropology and evolution among other areas that had interested me while I had largely ignored my high school curriculum) about how norms are transmitted to individuals in a particular society to outline a process where you might have the best chance of breaking those norms and replacing them with new, say revolutionary concepts a la Mao. I had discussed separating family members, moving people from their usual work environments in cities or universities into camps where they would have the usual feedback stripped and could be trained as it were on new concepts of what was proper behavior, proper ways of viewing the world in human social terms.

Anyway, my sociology professor had liked my paper very much and wanted to meet me, though the idea of me pursuing a career in sociology was not attractive to me. I was later shocked to discover that the Khmer Rouge implemented about what I had suggested in 1975 after capturing Phnom Penh. I hope it was a coincidence, or that will have to be added to the negative side of the scale when Anubis weighs my heart in the afterworld. As an aside, if you look at the album cover for the Out of Time album,

you will see that I placed the 1955 Les Paul I played on many of the tracks on that scale (cooked up an image of the 1240 B.C. Papyrus of Ani, i.e., Book of the Dead for scribe Ani).

My view of the reality of the Israel situation now in regard to Hamas and Gaza is compatible with what Kevin D. Williamson writes:

The great test for Israel is not restraint at all, but diligence in its national pursuit of the actual moral imperative in front of it, which is the annihilation of Hamas...offering the Palestinians land for peace was a well-intentioned folly; that there is not going to be a two-state solution, because the only state the Palestinians are interested in building is an Arab version of the Taliban regime in Afghanistan and the only solution they are interested in is Heinrich Himmler’s final one.

This kind of talk is anathema to the "left" I defined earlier, but those who are not shielded for the moment (in an increasingly debilitated America--I really do not know why the totalitarian nations and pseudo-religious psychopaths of Islam do not simply wait a few more years and then easily dispose of a once-great nation when its weapons and armed forces are no longer capable of actually fighting) would disappear from the field of evolution immediately were they to combat an enemy ready and willing to kill or be killed.

I use the terms "stupid" or "idiot" frequently when reacting to the appalling state of America. I should note that I am not really addressing the capacity to reason (one's innate capacity is hardly one's own responsibility, i.e., every man has something to offer and deserves a priori dignity and respect, whatever talents and abilities he is born with), but more the inability of childish personalities to use whatever reasoning capacity they possess due to emotional motivations for particular viewpoints (you are responsible for what use you make of the tools you are given).

I may be wrong in being so annoyed with these types to the extent they may have no conscious control over the phenomena (perhaps I should coin a new term for this, e.g., "constitutional stupidity" or "helplessly foolish"). However, it is impossible to adequately describe my revulsion for those who embrace their evil behavior. I am reminded of the response of the crowd in 33 AD who ignored the attempts of the Roman governor of Judaea (Pilate) to persuade them to select Jesus as one of the prisoners to be released (Pilate finding no fault with Jesus), the crowd continuing to demand the release of another prisoner instead and asserting that Jesus should be crucified, adding insolently that the responsibility should be on them and their children (Matthew 27, KJV). My own interaction with such folks makes it clear that most of them are aware of their reprehensible behavior and simply have no shame. They are the evil brood that persists in all populations to this day, those that reject those sent to guide and lead them and instead worship the Father of Lies (if you look around today you should be able to see that this is not a myth or metaphor; Baudelaire commented aptly in The Generous Player that the cleverest ruse of the Devil is to persuade you he does not exist) by their choices and actions. Matthew 23:37 and thereabouts describes the reaction of Jesus to such behavior, i.e., even for the spiritually advanced there are limits to what can be tolerated. One should show mercy wherever one can, but there can be no forgiveness for those who celebrate their wrong and persist in it.

My motive is not to make anyone feel bad about who they are, but rather to persuade them to feel shame and reform, if they have any capacity to do either. Who am I to make such observations or demands? Let me be nobody (I never really understood the idea of "wanting to be somebody"--only an idiot would accept what somebody did or said as good or commendable because of their feeling about the physical presentation of the person...oh, I forgot, that is exactly where this rotting culture is today). Let the things I say speak for themselves, to the extent the hearer is capable of desiring to find the truth and right way (that is of course what Jesus meant by frequently advising "he who has ears to hear, let him hear"). I am not a man of doctrine, i.e., I merely quote what is useful. I certainly have no appetite for crucifixion or the general "rewards" of the would-be prophet in this life, and to say I have not kept myself unspotted by the world (see book of James, one of my favorite books of the Bible) would be an understatement.

What have I done lately, i.e., have I been at all useful? Well, paraphrasing the TA comment I related earlier about the lack of academic capability in pit vipers, "not that much." I am increasingly worn-out it appears, finding it difficult to write (if you have stamina, you could scan over the last 5 years or so of my blogging up here and see a steady decline in quality and quantity). That being said, I occasionally am able to study and contribute in some small way: In September (2023) I became interested in a promising new antimicrobial, teixobactin, and researched the subject of antibiotic discovery and development, eventually publishing online a clear account of the status of teixobactin at this time. In the process of that investigation I was pleased to communicate with several researchers in the field (privately), the pleasure being both in new understanding as well as in finding there were still humans out there that I could communicate with (instead of this kind of instant recognition by one another of more advanced individuals, you usually find these days suspicion the moment one's words deviate from twitter vocabulary or Family Feud string matches, otherwise known as "AI" or "artificial idiocy").

November 27, 2023 some crackpot shot three men of Palestinian descent in Vermont and was immediately arrested. There is tremendous media coverage of the incident because of the desperation of the left to find some path to a false equivalency between Israeli action to eliminate Hamas in Gaza (note that the mainstream media continues to slip in mischaracterizations of IDF actions there as "retaliation" or "removing Hamas from power" or the like). Undoubtedly the shooter simply was a lower-level human fed on a diet of Faux News and social media feeds designed to keep such folks in a state similar to that of an irritated two-year-old who possessed a gun (awkward to place the qualifier phrase here, but it seems to work either way). He simply flipped out when he saw the men dressed like the people he had been trained to see as enemies. What is the point of wondering whether this was a "hate crime"? One might suspect that pulling on a target in otherwise innocuous context involved some degree of dislike, grin. It is yet another indication of the perverse rot at the heart of America (and Western civilization such as remains after our global influence acted for decades) that criminal actions have to be recast in some kind of politically correct agenda. Life on Earth, nature red in tooth and claw, the process of evolution requiring only that those who survive and leave more offspring to do the same win, demands that challenges to survival be met with all available focus. "Hate" is simply a later development (limbic system) of that natural ability of competitive biology. I don't know at this point how you repair the American educational system, which has this weird agenda of political correctness wired in at every level.
It is lamentable that there is apparently not a choice available between fascism and pervert-political-correctness (I would be available as that third choice, but I am long past my expiration date and not being seen on Entertainment Tonight as a generally appealing appearance with vacuous personality).

What hope is there for a world where statistically matched pieces of sentences are offered as evidence of artificial "intelligence" and believed, with breathless (if mindless) wonder? The subject of genius, which those of us who are still capable of thinking associate with production of new understanding or new works in a significant area (I am not overly impressed with a new basket design or a different way to stack rocks up and make a crude pyramid), is not well understood by many. An Anglo-Irish polymath, John Pentland Mahaffy (1839-1919), opined that:

...genius is original ... it strikes out new ideas, new solutions of problems, new lines of research, while the average man can only learn what others have already discovered for him. But a deeper and more careful inquiry reveals to us that absolutely new ideas are of the very rarest occurrence; almost the whole work of human genius consists in assimilating what others have thought, in combining what others have imagined separate, in recasting the form of their thought, and so producing what seems a perfectly new thing, and yet is only the old under a new aspect. No instance of this is more signal than that of a great composer in music. The gift of original melody, as it is called, is rare and precious. The possessor of it is justly considered a genius. But no melody could possibly speak to us except a combination of perfectly well known elements. The only originality is in their assimilation and reproduction.

While Mahaffy was an intellectual, i.e., he was capable of self-learning, understanding the work of others and writing intelligently about such work (as is, or was, much of my own work), he was not a genius, nor a notable composer. You can see that immediately (if you have eyes to read, as it were) in the passage if you have any knowledge of the history of physics or experience composing music or appreciating it. Men who have actually created new knowledge know that it is a deep mystery how mentality comes to exist in or around the physical structures of brains and how that human mind occasionally perceives, for example, mathematical truth for which there is no obvious connection to experience (see Nobel Prize laureate Roger Penrose's writings, and ignore the piss ants who desperately attempt to downplay his observations, those being in direct contradiction to the materialist agenda of those who now infest academia and the media). I would add that an original melody, phrasing in music that instantly resonates in the mind and invokes emotion, certainly relies on the scales and formats of the musical system, but the originality means precisely that the notes fall in a way not heard previously. While it is possible that a computer run long enough might occasionally toss together such a string, it would be buried among mountains of garbage and worse, there is no conscious entity there (unless a human is observing) to evaluate any of it!

That ends the November portion. I will add that in December 2023 I became interested in radio propagation in the UHF range after losing one of my local OTA (Over the Air) DTV (Digital Television) channels. I held an FCC General Radiotelephone license (originally obtained a First Class license in 1978, but in the five years before I renewed it in 1983 they stopped differentiating between First and Second class licenses, presumably because few applicants could pass the more difficult First Class battery of examinations) up until 1988 but had not done any radio-related work really since experimenting with amateur radio in 1978 (required a different license and passing a Morse code comprehension test, send/receive). After the broadcasters restored my local translator for the channel (I had an interesting thread of communication with their engineers, helping them by sending a telephoto shot I took with an old Canon SX400 IS camera from my window showing their translating transmitter antenna 9.7 miles distant, which of course established that I had a line of sight path) I continued to look at the subject off and on (necessarily intermittent work since, as I continue to whine about, I am only able to work a few hours every few days at this point before sinking into CFS fog).

In December 2023 a foreign radio engineer graciously let me work with some C (a programming language developed at Bell Laboratories, C being "devised in the early 1970s as a system implementation language for the nascent Unix operating system," quoting from Dennis M. Ritchie's 1993 paper, The Development of the C Language) code for a program he had written some years ago, a program to use SRTM (Shuttle Radar Topography Mission) DTED (Digital Terrain Elevation Data, a matrix of terrain elevation values, see mil spec MIL-PRF-89020B) and the ITM or irregular terrain model of radio propagation for frequencies between 20 MHz and 20 GHz. The ITM is based on the Longley-Rice model, which used electromagnetic theory and statistical analyses of both terrain features and radio measurements to predict the median attenuation of a radio signal as a function of distance and the variability of the signal in time and in space (quoting from NTIA REPORT 82-100). I compiled that code and ran some tests.

I then compiled and worked with John A. Magliacane's SPLAT! v1.4.2 terrestrial RF (radio frequency) path and terrain analysis tool for Unix/Linux, a program written in C++ (a more complex version of the C language). I assume some readers will realize by now that I normally work in a Linux environment, rather than Microsoft Windows. SPLAT! is based on ITM also, but adds Sid Shumate's Irregular Terrain With Obstructions Model (ITWOM) work, which replaces and supplements terrain diffraction calculations in the line-of-sight range and near obstructions, with Radiative Transfer Engine (RTE) functions.

In the LOS (line of sight) path you run into problems using two-ray ground reflection models because the radio reflecting characteristic of the intervening Earth surface is not accurately given using only elevation data (e.g., maybe bushes, houses, etc. are present and scatter the electromagnetic radiation rather than reflect it as a specular or mirror surface would). ITWOM seeks to mitigate this somewhat, though comparisons against actual field measurements don't really provide a clear winner among the various programs and models available. Radio engineering is a black art really and the most you can hope for is to obtain approximate propagation results from which to begin if planning a broadcast installation or trying to determine where an observed problem lies. Anyway, here is an example plot from SPLAT! showing the path profile between one of my local broadcast transmitters and my receiver:

The SPLAT! report on the RF between my broadcast transmitter and my receiver:

ITWOM Version 3.0 Parameters Used In This Analysis:

Earth's Dielectric Constant: 15.000

Earth's Conductivity: 0.005 Siemens/meter

Atmospheric Bending Constant (N-units): 301.000 ppm

Frequency: 473.000 MHz

Radio Climate: 5 (Continental Temperate)

Polarization: 1 (Vertical)

Fraction of Situations: 70.0%

Fraction of Time: 50.0%

Transmitter ERP: 1000 Watts (+60.00 dBm)

Transmitter EIRP: 1637 Watts (+62.14 dBm)

and

Summary For The Link Between KCWFLDtx and GDBorganRX:

Free space path loss: 109.83 dB

ITWOM Version 3.0 path loss: 138.39 dB

Attenuation due to terrain shielding: 28.56 dB

Field strength at GDBorganRX: 54.51 dBuV/meter

Signal power level at GDBorganRX: -76.25 dBm

Signal power density at GDBorganRX: -91.28 dBW per square meter

Voltage across a 50 ohm dipole at GDBorganRX: 44.06 uV (32.88 dBuV)

Voltage across a 75 ohm dipole at GDBorganRX: 53.97 uV (34.64 dBuV)

Mode of propagation: Line of Sight

ITWOM error number: 0 (No error)
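The figures in the report hang together arithmetically, which is a useful sanity check. The minimal Python sketch below uses only numbers copied from the report; the variable names are my own, and the received-power line assumes a 0 dBi receive antenna with no feed-line loss, which appears to be what the report does, since the result matches.

```python
import math

def watts_to_dbm(watts: float) -> float:
    """Convert power in watts to dBm (decibels relative to 1 milliwatt)."""
    return 10.0 * math.log10(watts * 1000.0)

# Values copied from the SPLAT! report above.
erp_watts = 1000.0
eirp_watts = 1637.0
free_space_loss_db = 109.83
itwom_path_loss_db = 138.39

erp_dbm = watts_to_dbm(erp_watts)
eirp_dbm = watts_to_dbm(eirp_watts)

# Extra loss attributed to terrain is the model loss minus the free space loss.
terrain_loss_db = itwom_path_loss_db - free_space_loss_db

# Assumption: 0 dBi receive antenna and no cable loss.
rx_power_dbm = eirp_dbm - itwom_path_loss_db

print(f"ERP:            {erp_dbm:+.2f} dBm")
print(f"EIRP:           {eirp_dbm:+.2f} dBm")
print(f"Terrain loss:   {terrain_loss_db:.2f} dB")
print(f"Received power: {rx_power_dbm:.2f} dBm")
```

To within rounding, these reproduce the report's +60.00 dBm, +62.14 dBm, 28.56 dB and -76.25 dBm. The dipole voltage lines are not reproduced here because they involve antenna assumptions beyond this simple budget.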

That SPLAT! output above compares well with my earlier C code result of 139.33 dB total path loss (to obtain that result I switched off that program's use of a two-ray reflection model). Both programs agree closely on the FSL (free space loss, i.e., amount of radio signal strength decay calculated in line of sight by purely deterministic electromagnetic equations without considering multipath ray reflections, over the horizon diffraction etc.), which I noted as 109.77 dB (the SPLAT! report above shows 109.83 dB). They differ on the additional attenuation due to ground effects and the like, but not by a lot.
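The free space loss is the one part of this that anyone can check by hand, since it depends only on distance and frequency. Here is a minimal Python sketch of the standard Friis free-space formula. It assumes the path length is the 9.7 miles I mentioned for the translator antenna; that is an assumption, since the report itself does not state the distance. The function name is my own.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0
METERS_PER_MILE = 1609.344

def free_space_loss_db(distance_m: float, frequency_hz: float) -> float:
    """Free space path loss in dB: 20*log10(4*pi*d*f/c)."""
    return 20.0 * math.log10(
        4.0 * math.pi * distance_m * frequency_hz / SPEED_OF_LIGHT_M_S
    )

# Assumption: 9.7 mile path; 473 MHz is the frequency from the report.
distance_m = 9.7 * METERS_PER_MILE
frequency_hz = 473.0e6

fsl_db = free_space_loss_db(distance_m, frequency_hz)
print(f"Free space loss over {distance_m / 1000.0:.2f} km: {fsl_db:.2f} dB")
```

This comes out at roughly 109.8 dB, in line with what the two programs report. The small remaining differences are what you would expect from the exact path length each program derives from the site coordinates.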

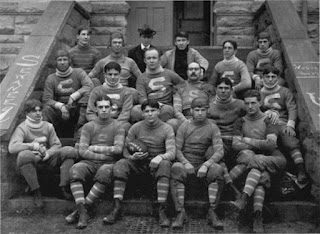

I should note that after writing about the 1899 Sewanee Tigers football team up here in September 2023, I read a book about Merrill’s Marauders (Gary Bjorge's Merrill's Marauders: Combined Operations In Northern Burma In 1944). The Marauders were a US Army long range penetration special operations jungle warfare unit (Merrill’s Marauders is the popular name given to the 5307th Composite Unit, also known by its code name Galahad) fighting in the Southeast Asian theater of World War II. The unit became famous for its deep-penetration missions behind Japanese lines, often engaging Japanese forces superior in number, quoting Wikipedia. The reason I mention this unit is that their story was another appraisal of the capacity for some humans to persist in the context of unrelenting physical exhaustion and injury. Fatigue, disease, malnourishment and unrelenting battle reduced the original unit's 2nd Battalion, with 27 officers and 537 men, to about 12 men still in action, with the situation for the 3d Battalion similar. The 1st Battalion still had a handful of officers and 200 men, but on 10 August 1944 the unit was disbanded, because, as campaign commander Lieutenant General Joseph W. Stilwell put it, "[the unit] is just shot." If you are confused by the organizational references, here is an organization chart of the unit:

Bjorge concluded that as the only American combat unit within the combined force in the northern Burma campaign, the Marauders could not avoid being given special burdens that came from being Americans. Their presence was required to form viable multinational task forces when the units of other countries could not or would not work together alone. The guys on the ground paid the price, as usual.